For Teachers: Datasets for education and for fun

These datasets can be used as the basis for a joint project involving, for example, an art class and an astronomy or physics class. Consider combining classes in the two subjects for a couple of weeks and discussing the making of astronomical images both from a purely aesthetic viewpoint and from a rigorous scientific point of view. Computer classes will appreciate seeing direct applications of techniques in a visual field like astronomy.

Students might employ the same work strategy as a professional outreach group: first by acquiring the raw astronomical data directly from astronomers (the datasets), and then by creating an aesthetically pleasing image and a news release, perhaps for publication in the school magazine or on the school intranet.

A professional outreach group will normally read the original scientific papers and then interview the scientists concerned to find the story that will catch the scientific journalist’s eye. Students could use an original news release as background material and then write an article that is accessible to students in other disciplines. The article should of course be illustrated by an image that they have produced themselves. Alternatively the class could divide into several different groups, each group producing an article for a single collected issue.

Note: The raw image files are in 16-bit format, and are therefore quite large. Depending on the internet connection at your school, it might be wise to download the images beforehand.

Some more detailed questions are given below for students to consider as they work on the images. Feel absolutely free to edit the questions as you see fit (pun intended :-)). They are intended for use after students have completed the procedures described in the "step-by-step" guide to astronomical image processing.

Questions

TECHNICAL ISSUES

Open a reasonably well-balanced image in Photoshop and answer these questions:

Losing information

Losing information during image processing is an ever-present problem.

Experiment! Do not save the image during the following experiments, as they can greatly reduce its quality.

- Try to open the levels correction layer for all the original layers one by one and set the black and white sliders close to each other for each layer. This is an extreme example of an image where too much information has been removed while truncating the histogram.

- Do not save this image - revert to the pretty one you started out with, either by choosing "revert" under the file menu or by picking the appropriate step in the history window.
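
For teachers who want to show the same effect numerically, the following is a minimal sketch of what a levels adjustment does to pixel values. It assumes a simple linear stretch between the black and white points with clipping outside them; Photoshop's actual implementation may differ, and the function name `apply_levels` and the sample pixel values are hypothetical.

```python
def apply_levels(pixels, black, white, out_max=255):
    """Linearly stretch values between black and white to 0..out_max.

    Values below the black point or above the white point are clipped,
    which is where information is lost.
    """
    if white <= black:
        raise ValueError("white point must be above black point")
    scale = out_max / (white - black)
    result = []
    for value in pixels:
        clipped = min(max(value, black), white)
        result.append(round((clipped - black) * scale))
    return result


# A hypothetical row of 16-bit pixel values spread over the full range.
row = list(range(0, 65536, 4096))

# Sliders far apart: every input level stays distinguishable.
gentle = apply_levels(row, black=0, white=65535)

# Sliders close together: most input levels collapse to pure black or white.
extreme = apply_levels(row, black=30000, white=34000)

print(len(set(row)), "distinct input levels")
print(len(set(gentle)), "distinct levels after a gentle stretch")
print(len(set(extreme)), "distinct levels after an extreme stretch")
```

Counting the distinct output values is a crude but easy measure of how much tonal information survives the adjustment.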

Colour blending in the additive colour mixing mode

Look at the coloured layers individually to get an impression of each one. This can be done by making the other layers invisible, simply by clicking on the eye to the left of the layer in the layer window. Then try to view just the red and green layers together.

- What colour results from mixing red and green?

- Try the same with the other two possible combinations.

- What is the result of mixing red and blue?

- What is the result of mixing blue and green?
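
As a check on the answers, additive mixing can be sketched in a few lines. This assumes the simplest model, in which 8-bit channel values are summed and clipped at 255; the blending mode used in Photoshop may compute the result differently for colours that are not pure primaries. The helper name `add_colours` is hypothetical.

```python
def add_colours(*colours):
    """Additively mix 8-bit RGB colours, clipping each channel at 255."""
    return tuple(min(255, sum(channel)) for channel in zip(*colours))


RED = (255, 0, 0)
GREEN = (0, 255, 0)
BLUE = (0, 0, 255)

# Names of the mixed colours, keyed by their RGB values.
NAMES = {
    (255, 255, 0): "yellow",
    (255, 0, 255): "magenta",
    (0, 255, 255): "cyan",
    (255, 255, 255): "white",
}

for label, pair in [("red + green", (RED, GREEN)),
                    ("red + blue", (RED, BLUE)),
                    ("blue + green", (BLUE, GREEN))]:
    mixed = add_colours(*pair)
    print(label, "->", mixed, NAMES[mixed])

# All three primaries together give white.
print("red + green + blue ->", NAMES[add_colours(RED, GREEN, BLUE)])
```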

Assigning "natural" colours to the different exposures:

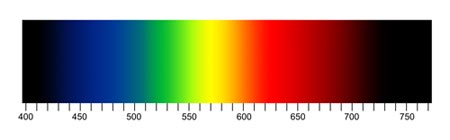

- Try changing the hue of one or more of the layers so that it corresponds more closely to the colour of the wavelength at which the filter used to acquire that exposure has its maximum transmission. Use the figure above, which shows the relationship between colour and wavelength, for the conversion.

- Did the quality of your image improve? Why / why not?
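
For computer classes, the conversion from wavelength to colour can also be sketched in code. The function below is a rough piecewise-linear approximation of the visible spectrum; its breakpoints (380, 440, 490, 510, 580, 645 and 780 nm) are assumptions taken from a commonly circulated approximation, not values read from the figure, and it ignores the dimming of the eye's response near the ends of the spectrum. The name `wavelength_to_rgb` is hypothetical.

```python
def wavelength_to_rgb(wavelength_nm):
    """Return an approximate (r, g, b) triple in 0..1 for a wavelength in nm.

    Wavelengths outside the assumed visible range (380-780 nm) map to black.
    """
    w = wavelength_nm
    if 380 <= w < 440:
        return ((440 - w) / 60, 0.0, 1.0)   # violet to blue
    if 440 <= w < 490:
        return (0.0, (w - 440) / 50, 1.0)   # blue to cyan
    if 490 <= w < 510:
        return (0.0, 1.0, (510 - w) / 20)   # cyan to green
    if 510 <= w < 580:
        return ((w - 510) / 70, 1.0, 0.0)   # green to yellow
    if 580 <= w < 645:
        return (1.0, (645 - w) / 65, 0.0)   # yellow to red
    if 645 <= w <= 780:
        return (1.0, 0.0, 0.0)              # red
    return (0.0, 0.0, 0.0)                  # not visible


# Example filter wavelengths in nm, chosen for illustration only.
for wavelength in (500, 656, 658, 1000):
    print(wavelength, "nm ->", wavelength_to_rgb(wavelength))
```

In this approximation, two filters only a couple of nanometres apart in the red receive the same colour, and infrared wavelengths receive no colour at all.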

DISCUSSION SUBJECTS

Imagine that you are the author of an "International charter of proper procedure for astronomical image analysis". Which rules would you include?

- Is it reasonable to assign, for instance, a red colour to an infrared exposure and a deep purple to an ultraviolet exposure?

- Is it reasonable to assign a totally different colour, for instance bright green, to the 631 nm oxygen line, purely for aesthetic or artistic reasons?

When an image is composed partly of two different exposures made through narrow-band filters at 656 nm (H-alpha) and at 658 nm ([NII], nitrogen), it is very tempting to assign radically different colours to the two exposures, simply to be able to distinguish between the two elements and their locations in, say, a planetary nebula.

However, in a strict approach to image processing, the goal is to produce an image that approximates what a future space traveller would see when approaching the object from afar. Although the human eye is a good detector, a two-nanometre wavelength difference is not enough for it to distinguish between hydrogen and nitrogen.

- By how much is it reasonable to let the hues of two exposures taken at almost the same wavelength differ?

- Is there any reason to produce pretty-looking images without describing the physical processes that produce the radiation?

- Is it possible to enjoy an image showing a nebulous envelope of hydrogen, made at the wavelength corresponding to the H-alpha line, without knowing anything about atomic transitions?

- What would you do with a dataset consisting of three different infrared exposures at, say, 814 nm, 1000 nm and 1200 nm?
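
One possible answer to the last question, offered as a starting point for discussion and not as the correct one, is to keep the exposures in wavelength order: the shortest wavelength is shown in blue and the longest in red, even though none of them is visible to the eye. The sketch below assumes exactly three exposures; the function name `chromatic_order` is hypothetical.

```python
def chromatic_order(wavelengths_nm):
    """Assign display colours to three exposures in order of wavelength.

    The shortest wavelength gets blue and the longest gets red, mirroring
    the order of colours in the visible spectrum.
    """
    ordered = sorted(wavelengths_nm)
    if len(ordered) != 3:
        raise ValueError("this sketch expects exactly three exposures")
    return dict(zip(ordered, ("blue", "green", "red")))


for wavelength, colour in chromatic_order([1200, 814, 1000]).items():
    print(wavelength, "nm ->", colour)
```

Students can then debate whether such an image belongs in their charter, and how its caption should explain the colour assignment.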